Every intervention strong enough to meaningfully reduce misinformation on a social platform is also strong enough to be called censorship. Designing moderation features for an audience primed to read moderation as an attack was the problem this project was built around.

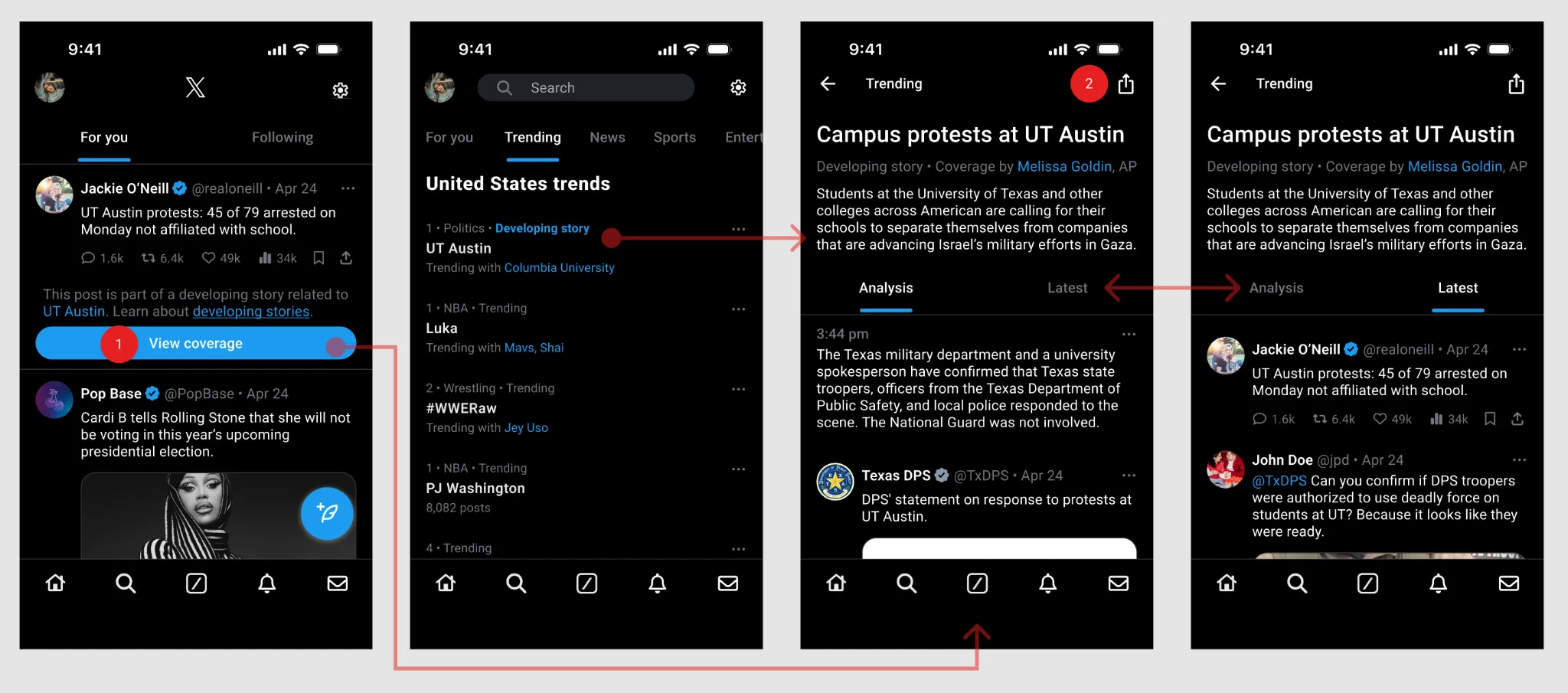

Our three-person team designed, prototyped, and tested three intervention concepts for X, each acting at a different point in the misinformation lifecycle. We anchored testing on one debunked claim — that the National Guard had been deployed to UT Austin during the spring 2024 protests — to keep the scenario concrete and charged without being partisan. The three concepts, by where they intervene:

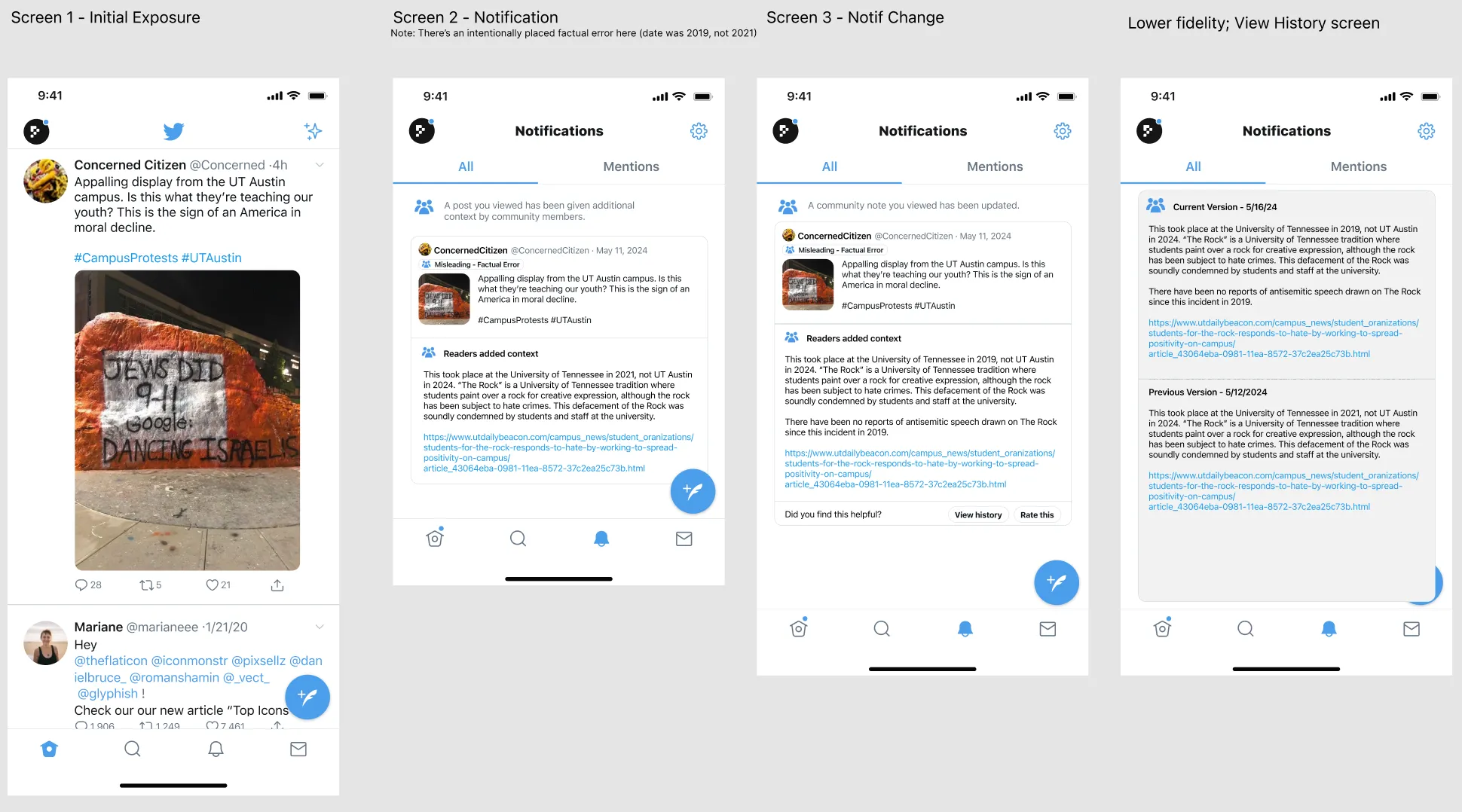

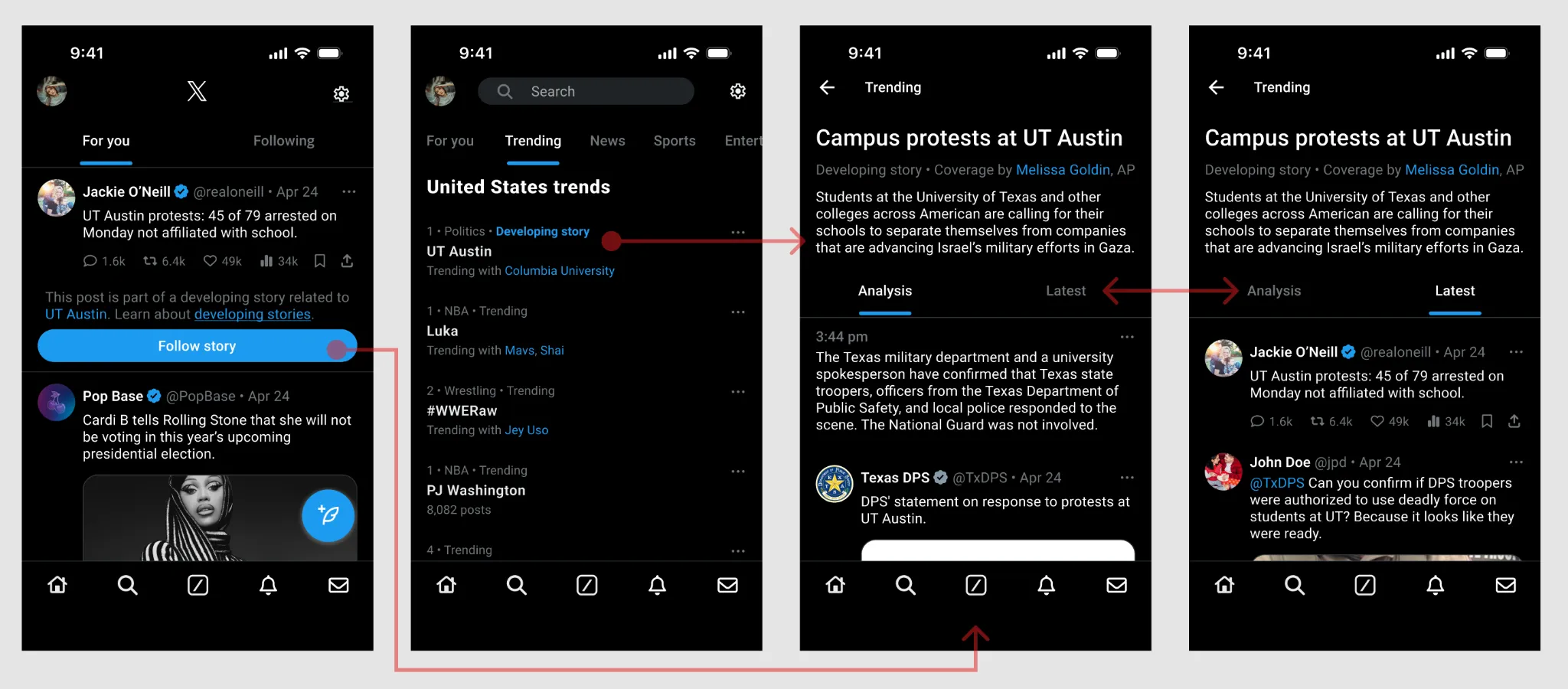

- Community Notes Notifications — correct after the fact: notify a user when a post they engaged with later receives community context.

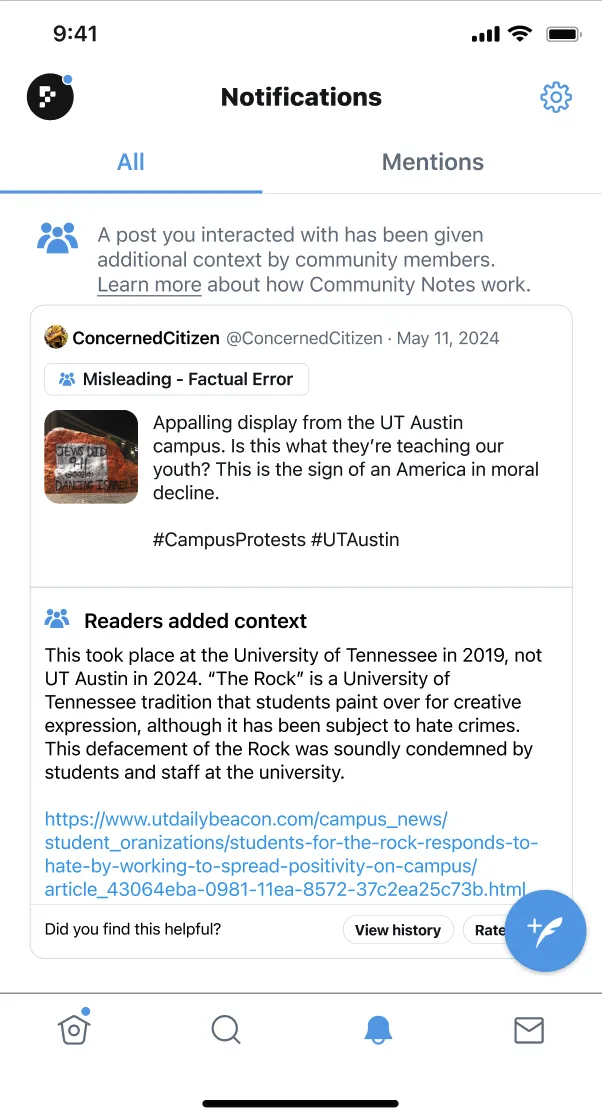

- Quarantine — contain at the moment of spread: withhold a flagged post from algorithmic feeds while it is under review.

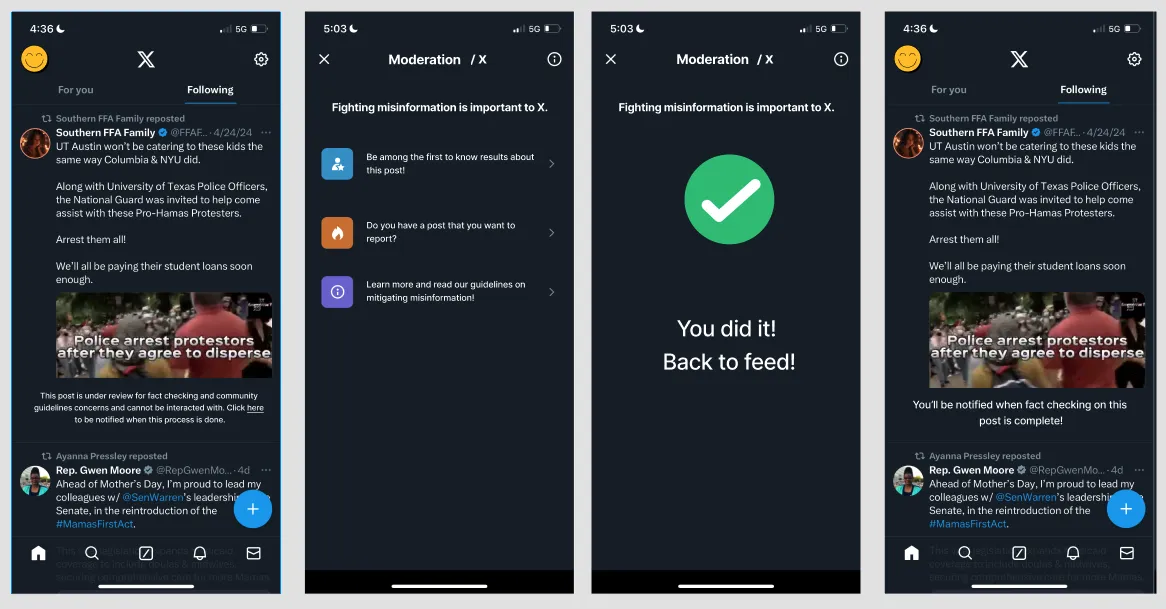

- Developing Story Tracker — contextualize before exposure: a journalist-curated layer over a trending topic.

Findings

We tested all three with five participants in think-aloud sessions, evaluating each against five design values — free speech, reducing bias, transparency, societal impact, and user effort. What we learned, concept by concept:

Community Notes Notifications — the most uniformly well-received

"I would appreciate being notified if something I read is factually incorrect or has other errors because oftentimes I bring this up in discussion and it would be embarrassing to be wrong."

We narrowed the trigger from "viewed" to "interacted with" — liked, reposted, or replied. For note updates we set a higher bar: only evolving guidance from verifiable, authoritative sources, not evolving rumors. And we linked directly to X's Community Notes documentation, lowering the friction for people who want to understand the system before trusting it.
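As a rough illustration, the narrowed trigger can be written as a small rule. This is a sketch under stated assumptions, not X's implementation and not something we built: the `NoteEvent` shape, the `source_is_authoritative` flag, and the `should_notify` function are all hypothetical names introduced here.

```python
from dataclasses import dataclass

# Interactions that qualify for a notification after the refinement.
# Merely viewing a post is deliberately excluded.
QUALIFYING_INTERACTIONS = {"like", "repost", "reply"}


@dataclass(frozen=True)
class NoteEvent:
    """A Community Note event on a post (hypothetical shape)."""
    is_update: bool                # False for the first note, True for a later revision
    source_is_authoritative: bool  # assumed to be decided upstream; not modeled here


def should_notify(user_interactions: set[str], event: NoteEvent) -> bool:
    """Return True if the user should be notified about this note event."""
    # The user must have liked, reposted, or replied; a view alone never qualifies.
    if not (user_interactions & QUALIFYING_INTERACTIONS):
        return False
    # Updates face a higher bar than the initial note:
    # only verifiable, authoritative sources trigger a follow-up.
    if event.is_update and not event.source_is_authoritative:
        return False
    return True
```

Under this sketch, a user who only viewed a post is never notified, while a user who replied is notified about the first note but hears about later updates only when the source clears the authoritative bar.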

Users saw it as an augmentation of how they already use X: keeping up with evolving stories, and not spreading things they later learn are wrong. The concern was overload — if simply viewing a post could trigger a notification, users feared being flooded. A secondary finding: people held wildly different mental models of how Community Notes works, and those who didn't understand it were skeptical of its corrections regardless of content.

Quarantine — understood, but it concentrated power

"I think this could be good but yea…screenshots. I feel like it's almost a challenge for them like 'oh you don't want me to share this I'm gonna prove you wrong and share it'…they really think the radical Left is out to get them."

We restricted quarantine initiation to the platform's moderation team — users can flag a post, but only moderators can quarantine it — and scoped it to content with potential for immediate harm rather than as a general tool. The removal flow was redesigned for transparency: people who followed an account could still see removed content in a dedicated moderation view.
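The permission split in the refined concept can be sketched in a few lines. Everything here is an illustrative assumption rather than a real platform API: the `Role` enum, the `Post` fields, and the three functions are hypothetical, and the `immediate_harm` judgment is passed in as a plain boolean because how moderators assess it is outside this sketch.

```python
from dataclasses import dataclass
from enum import Enum


class Role(Enum):
    USER = "user"
    MODERATOR = "moderator"


@dataclass
class Post:
    post_id: str
    flagged: bool = False
    quarantined: bool = False


def flag(post: Post, actor: Role) -> None:
    """Any role may flag a post; flagging alone never removes it from feeds."""
    post.flagged = True


def quarantine(post: Post, actor: Role, immediate_harm: bool) -> bool:
    """Quarantine a post; return True only if the quarantine was applied."""
    # Only the platform's moderation team can initiate quarantine.
    if actor is not Role.MODERATOR:
        return False
    # Scoped to content with potential for immediate harm, not a general tool.
    if not immediate_harm:
        return False
    post.quarantined = True
    return True


def eligible_for_algorithmic_feed(post: Post) -> bool:
    """Quarantined posts are withheld from algorithmic feeds while under review."""
    return not post.quarantined
```

Keeping `flag` and `quarantine` as separate operations is the point of the refinement: a wave of user reports changes only the `flagged` state, so reporting by itself cannot pull a post out of feeds.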

Users grasped the appeal — it targets the infrastructure of spread rather than requiring active user behavior — but raised real concerns about free speech, bias, and adversarial use. They also worried the reporting mechanism itself could be weaponized — turned against queer communities, artists, activists, and small businesses.

Developing Story Tracker — the warmest reception

"I would use this as a reference when debating my family during stuff in group texts. My parents are smart but sometimes they share and believe crazy sh*t. This could automate it for me to send them here."

We clarified the entry-point language ("Follow Story" was confusing next to X's existing "follow"), added a Share button so the curated view could be sent directly to others — turning it into a personal debunking tool — and recommended X adopt a journalist-selection policy that prioritizes outlets with minimal perceived political lean.

This concept fit most naturally into existing behavior. People already use X to follow breaking news, and the tracker streamlines that without forcing a change. The contested point was the journalist-curated "Analysis" tab being shown by default — some read it as the platform asserting an editorial view, "narrating rather than having me research for myself."

Three principles

Across the three concepts, three principles held — and they're what I'd carry into any moderation work:

Agency distribution determines reception. Quarantine was the most technically aggressive intervention and drew the most resistance — not because users disagreed with the goal, but because it concentrated authority in the platform. The concepts that distributed agency across communities, journalists, and users were better received. Interventions that augment user agency beat those that replace it.

Notification design is misinformation design. Even for users who valued being corrected, the prospect of overload was enough to undermine trust in the system. An accurate correction that users learn to ignore is no better than no correction.

Mental models shape trust as much as the intervention itself. People who understood how Community Notes works trusted its corrections; those who didn't were skeptical regardless of the content. Designing effective moderation also means designing for comprehension of the moderation system; the two can't be separated.

Process

We used a six-stage, values-centered process: literature review → success criteria → ideation → medium-fidelity mockups → user testing → refinement. I contributed to every stage — literature synthesis, ideation and concept scoring, Figma prototyping, running sessions, analysis, and the final report.

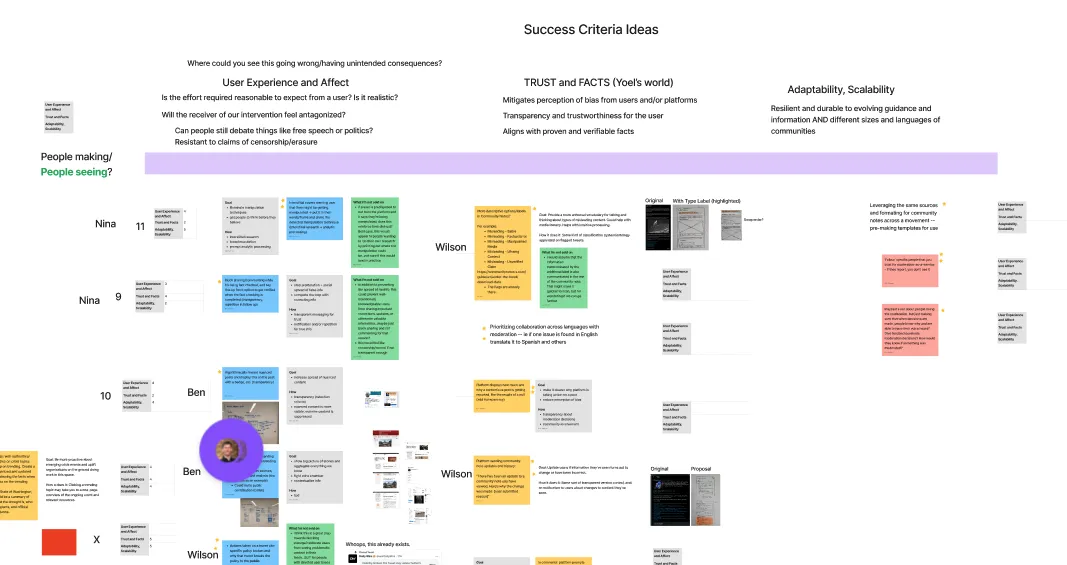

Success criteria and values

The literature surfaced three initial criteria — user experience and affect, trust and facts, adaptability and scalability — which we translated into five concrete, discussable design values: free speech, reducing bias, transparency, societal impact, and user effort. Those values structured both our concept scoring and our test questions.

From six concepts to three

We brainstormed against the criteria and developed six ideas in sketch form: a Community Notes extension, more descriptive Community Notes labels, an interstitial manipulation warning, blocking shares of posts under review, badges that reward nuanced posts, and journalist-curated story tracking. After scoring and discussion we combined the first two and carried three forward (Community Notes Notifications, Quarantine, and the Developing Story Tracker), each mapping to a distinct theory of change: correct retroactively, contain at spread, contextualize before exposure.

User testing

We recruited five participants with varied backgrounds and X-usage patterns — a software engineer, a Latino man from Texas with medium-to-high usage, an urban planner in DC, a medical researcher in Seattle, and a Seattle PhD student — and walked each through all three medium-fidelity Figma prototypes, evaluating against the five values and probing mental models. We acknowledge the sample skews educated, urban, and left-leaning — a real limitation for politically charged moderation research.

Reflection

The biggest limitation is sample diversity. Five educated, urban participants, tested on a politically complex but not explicitly partisan claim, leave real uncertainty about how these interventions would land with users who hold strong partisan identities or treat "free speech" as a value above accuracy. That is the population most likely to push back on moderation, and a meaningful share of X's base. Future work should prioritize recruiting across political identity.

The medium-fidelity, non-interactive prototypes also limit ecological validity. Hitting a Quarantine notice while mindlessly scrolling is different from examining it attentively in a research session; higher-fidelity testing inside a simulated X session would surface different reactions.

What worked: the values-centered framework genuinely structured both ideation and testing, the real debunked claim grounded the sessions, and discovering that each concept maps to a distinct theory of change — correct, contain, contextualize — gave us a vocabulary for recommending when to deploy which.